Image Processing Experiments for Fire Plume Detection via Fixed HPWREN Cameras

This summary describes an exploratory activity by HPWREN and its collaborators. It is not a mainstream part of the project and has no dedicated resources, and its continuation depends somewhat on the level of interest it generates.

Since HPWREN started deploying cameras at its backbone sites in 2002 for persistent environmental observations (/cameras/), it has been approached numerous times about concepts and technologies for automated fire plume detection. For a variety of reasons this has not yet resulted in broad technology adoption. One of the main reasons has often been the expectation that continuous, inefficient video streams would have to be sent from the cameras across a multi-purpose network to centralized servers. However, more recent technologies, such as small edge computing devices with built-in neural network capabilities, are starting to make it realistic to co-locate equipment at mountain-top backbone sites. Such equipment can read image data from a local camera at a higher rate than HPWREN's typical image acquisition, process it, and filter it through a neural network to signal the probability of an event.
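As a rough illustration of the edge approach, the sketch below shows the general shape of a co-located processing loop: frames are read and scored locally, and only a small event record leaves the site when a probability threshold is crossed. The `score_frame` callable is a hypothetical placeholder for the on-device neural network, and the threshold and the requirement of several consecutive high-scoring frames are illustrative assumptions, not HPWREN parameters.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator, Sequence


@dataclass
class Event:
    frame_index: int
    probability: float


def edge_detect(
    frames: Iterable[Sequence[float]],
    score_frame: Callable[[Sequence[float]], float],
    threshold: float = 0.8,     # hypothetical alert threshold
    min_consecutive: int = 3,   # hypothetical debounce length
) -> Iterator[Event]:
    """Yield one Event each time `min_consecutive` successive frames score at
    or above `threshold`; all other frames stay on the local device."""
    run = 0
    for i, frame in enumerate(frames):
        p = score_frame(frame)  # placeholder for local neural network inference
        run = run + 1 if p >= threshold else 0
        if run == min_consecutive:  # fires once per sustained detection
            yield Event(frame_index=i, probability=p)


# Usage with toy "frames" whose first value stands in for a model score.
frames = [[0.1], [0.9], [0.95], [0.9], [0.9], [0.2], [0.85], [0.9], [0.99]]
events = list(edge_detect(frames, score_frame=lambda f: f[0]))
print([e.frame_index for e in events])  # [3, 8]
```

Requiring a short run of high scores, rather than a single frame, is one simple way to keep isolated false positives from generating network traffic; the right trade-off would have to be tuned against real data.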

This approach was initially suggested to HPWREN by John Graham of Calit2, and reinforced by Calit2's founding director Larry Smarr, who responded with "training data for fire detection" in a discussion about potential uses for the multi-hundred-terabyte long-term HPWREN camera image archive, which Calit2 helps support significantly.

The interpretation of such data can then be used to detect fire plumes and other phenomena observable on fixed cameras. It can drive a signaling process that alerts responsible parties, modifies equipment behavior (for example, by raising the image collection frame rate), or provides event-targeting information to zoomable PTZ cameras.
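One simple form of event-targeting information for a PTZ camera is a compass bearing derived from where a detection appears in a fixed camera's image. The sketch below assumes a known bearing for the image center and a known horizontal field of view, and uses an ideal pinhole (rectilinear) camera model that ignores lens distortion. The function name and the example numbers are hypothetical.

```python
import math


def pixel_to_bearing(x: float, image_width: int, center_bearing_deg: float,
                     hfov_deg: float) -> float:
    """Convert a pixel column in a fixed camera image to a compass bearing.

    Assumes an ideal pinhole camera (no lens distortion), no camera roll,
    and a known compass bearing for the image center.
    """
    # Focal length in pixels, derived from the horizontal field of view.
    focal_px = (image_width / 2) / math.tan(math.radians(hfov_deg) / 2)
    # Horizontal offset of the pixel from the optical center.
    dx = x - image_width / 2
    # Angle relative to the optical axis, positive to the right (clockwise).
    offset_deg = math.degrees(math.atan2(dx, focal_px))
    return (center_bearing_deg + offset_deg) % 360


# Hypothetical camera: 3072 pixels wide, 60 degree field of view, facing east.
print(round(pixel_to_bearing(3072, 3072, 90.0, 60.0), 6))  # 120.0
```

A PTZ controller would still need its own calibration and, for distant targets, a tilt estimate; the bearing alone only narrows the pan direction.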

In addition, discussions with the LBNL Fuego project (https://fuego.ssl.berkeley.edu/smoke-detection/) have been valuable for seeing how other researchers approach automated plume detection.

This work is limited to fixed high-resolution cameras because their frames show the same area in a persistent surround view, without anyone moving the camera via PTZ controls. As a result, these cameras can see fires at very early stages, and the fires remain trackable from frame to frame.
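Because a fixed camera's field of view does not move, the same pixel position covers the same patch of terrain in every frame, so even a plain per-pixel difference between successive frames isolates what changed in the scene. The minimal sketch below compares two small grayscale frames stored as nested lists; the `change_mask` name and the threshold value are illustrative assumptions. With a camera that had just been panned or zoomed, the same comparison would flag large parts of the image even without any real change in the scene.

```python
from typing import List

Frame = List[List[int]]  # grayscale intensities, 0-255


def change_mask(prev: Frame, curr: Frame, threshold: int = 25) -> List[List[bool]]:
    """Mark pixels whose intensity changed by more than `threshold`
    between two frames from the same fixed camera."""
    return [
        [abs(c - p) > threshold for p, c in zip(prev_row, curr_row)]
        for prev_row, curr_row in zip(prev, curr)
    ]


prev = [[10, 10, 10], [10, 10, 10]]
curr = [[10, 80, 12], [10, 10, 200]]
print(change_mask(prev, curr))  # [[False, True, False], [False, False, True]]
```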

This summary describes only some first experimental steps, taken after considering the limitations of detecting plumes and other events from individual images alone.

The HPWREN Fire Ignition image Library (FIgLib, /HPWREN-FIgLib/) uses images from fixed cameras at HPWREN sites. Its primary purpose is to create data sets covering 40 minutes before and after past fire ignitions for use in neural network training. Video animations are also included for illustration. The image file names are:

origin_timestamp _ offset_(sec)_from_visible_plume_appearance.jpg
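A sketch of how this naming convention could be turned into training labels: read the origin timestamp and the signed offset from the name, and treat frames with a negative offset as taken before the plume became visible. This assumes the two fields are joined by a single underscore, that the timestamp is in Unix epoch seconds, and that the offset is a signed integer (for example `1528303680_-02400.jpg`); the example name, the `parse_name` function, and the labeling rule are hypothetical.

```python
import re
from datetime import datetime, timezone
from typing import NamedTuple

# Assumed form: <origin timestamp>_<signed offset in seconds>.jpg
NAME_RE = re.compile(r"^(?P<ts>\d+)_(?P<offset>[+-]?\d+)\.jpg$")


class FigLibFrame(NamedTuple):
    timestamp: datetime  # origin timestamp, assumed Unix epoch seconds (UTC)
    offset_s: int        # seconds relative to visible plume appearance
    label: int           # 1 if a plume should be visible, else 0


def parse_name(name: str) -> FigLibFrame:
    m = NAME_RE.match(name)
    if m is None:
        raise ValueError(f"unexpected file name: {name!r}")
    ts = datetime.fromtimestamp(int(m["ts"]), tz=timezone.utc)
    offset = int(m["offset"])
    return FigLibFrame(ts, offset, 1 if offset >= 0 else 0)


print(parse_name("1528303680_-02400.jpg").label)  # 0 (before plume appearance)
print(parse_name("1528303680_+00060.jpg").label)  # 1 (after plume appearance)
```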

The objective is to detect fires at early stages. Hence the FIgLib data and its derivatives focus on the first 40 minutes after the ignition event, rather than on the massive scale such fires can grow to. Animations of large fires can be found at https://www.youtube.com/user/hpwren/videos.

The idea of using motion vectors across successive frames to add a temporal dimension was first prompted by watching HPWREN fire videos run through a motion estimator demonstration program on an Nvidia Jetson Nano developer kit. While that process easily tags plumes at a very early stage, it also tags everything else that moves. An additional process, likely a TensorFlow-based neural network, will therefore be required to derive the probability that a tagged region is an actual plume.
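The gap described here can be made concrete. A motion estimator effectively yields a mask of moving pixels; grouping that mask into connected regions produces candidate boxes, and each box still needs a classifier to decide whether it is a plume or something else that moves. The sketch below groups a boolean mask into 4-connected regions with a breadth-first search and returns bounding boxes. The `candidate_regions` name and the `min_pixels` size filter are illustrative assumptions, and the classification step itself is deliberately left out.

```python
from collections import deque
from typing import List, Tuple

Box = Tuple[int, int, int, int]  # (top, left, bottom, right), inclusive


def candidate_regions(mask: List[List[bool]], min_pixels: int = 1) -> List[Box]:
    """Group True pixels into 4-connected regions and return bounding boxes."""
    rows, cols = len(mask), len(mask[0]) if mask else 0
    seen = [[False] * cols for _ in range(rows)]
    boxes: List[Box] = []
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            # Breadth-first flood fill starting at this unvisited moving pixel.
            queue = deque([(r, c)])
            seen[r][c] = True
            top, left, bottom, right, count = r, c, r, c, 0
            while queue:
                y, x = queue.popleft()
                count += 1
                top, bottom = min(top, y), max(bottom, y)
                left, right = min(left, x), max(right, x)
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if 0 <= ny < rows and 0 <= nx < cols and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            if count >= min_pixels:  # drop tiny regions (assumed noise)
                boxes.append((top, left, bottom, right))
    return boxes


mask = [
    [True, True, False, False],
    [False, True, False, False],
    [False, False, False, True],
]
print(candidate_regions(mask))  # [(0, 0, 1, 1), (2, 3, 2, 3)]
```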

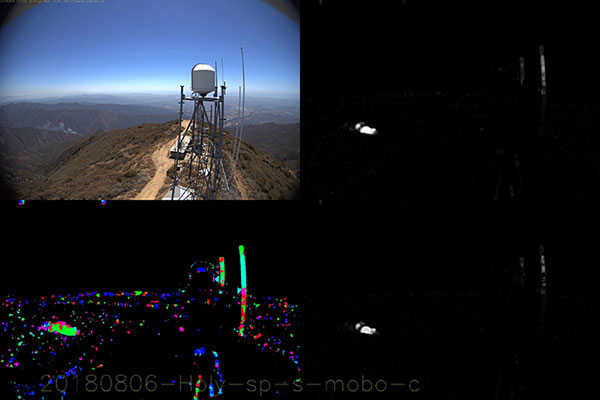

While the Nvidia motion estimator provided a good starting point, Byungheon (Joseph) Jeong, a Calit2 PRP-REU student working with John Graham, adapted a more flexible OpenCV approach based on the optical flow code of Chuan-en Lin (chuanenlin, Hong Kong, https://github.com/chuanenlin/optical-flow). His version is available at https://gitlab.nautilus.optiputer.net/byungheon-jeong/optical-flow_farneback-method and creates an animation that stacks the original camera images with the combined motion vectors. Jim Davidson (Valley Center Deputy Fire Marshal and long-time HPWREN collaborator) and Hans-Werner Braun (HPWREN) further modified Joseph's code to separate the vectors into individual angle and velocity images, and to add a delta-velocity image computed from successive velocity frames.
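The separation into angle, velocity, and delta-velocity images can be summarized per pixel. A dense optical flow field gives a displacement (dx, dy) for every pixel; the velocity is its magnitude, the angle is its direction, and the delta-velocity is the change in magnitude between successive flow frames. The stdlib-only sketch below shows that arithmetic on nested lists and is not the mvec code itself. In OpenCV the flow field would come from `cv2.calcOpticalFlowFarneback`, and `cv2.cartToPolar` performs the same magnitude and angle conversion on whole arrays; how the actual software scales these values into displayable images is not shown or assumed here.

```python
import math
from typing import List, Tuple

Flow = List[List[Tuple[float, float]]]  # per-pixel (dx, dy), pixels per frame
Grid = List[List[float]]


def velocity_and_angle(flow: Flow) -> Tuple[Grid, Grid]:
    """Split a flow field into speed (pixels/frame) and direction (degrees)."""
    speed = [[math.hypot(dx, dy) for dx, dy in row] for row in flow]
    # atan2 returns -180..180 degrees; shift into 0..360 like cv2.cartToPolar.
    # Note that image y points downward, so angles increase clockwise on screen.
    angle = [[math.degrees(math.atan2(dy, dx)) % 360 for dx, dy in row] for row in flow]
    return speed, angle


def delta_velocity(prev_speed: Grid, curr_speed: Grid) -> Grid:
    """Per-pixel change in speed between two successive flow frames."""
    return [[c - p for p, c in zip(pr, cr)] for pr, cr in zip(prev_speed, curr_speed)]


s0, a0 = velocity_and_angle([[(3.0, 4.0), (0.0, 0.0)]])
s1, a1 = velocity_and_angle([[(6.0, 8.0), (0.0, -2.0)]])
print(s1, delta_velocity(s0, s1))  # [[10.0, 2.0]] [[5.0, 2.0]]
```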

Some or all of these three images per input frame, plus the original camera output, can then be used for further processing, such as deriving fire plume probabilities with neural networks. For now, this summary outlines the processing described so far and adds a video illustration of the entire FIgLib archive as of this writing. The in-progress software is available at https://gitlab.nautilus.optiputer.net/ar-noc/mvec.
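One way to feed some or all of these components to a neural network is to stack them as channels of a single input, each scaled to a common range. The sketch below builds per-pixel feature vectors from a grayscale frame plus velocity, angle, and delta-velocity grids. The `stack_channels` name, the channel order, and the scaling constants `max_speed` and `max_delta` are hypothetical choices for illustration, not values taken from the HPWREN software.

```python
from typing import List

Grid = List[List[float]]


def clip01(v: float) -> float:
    return min(1.0, max(0.0, v))


def stack_channels(gray: Grid, speed: Grid, angle: Grid, dspeed: Grid,
                   max_speed: float = 20.0, max_delta: float = 10.0) -> List[List[List[float]]]:
    """Return an H x W x 4 nested list with every channel scaled to [0, 1]."""
    out = []
    for g_row, s_row, a_row, d_row in zip(gray, speed, angle, dspeed):
        out.append([
            [clip01(g / 255.0),                     # original intensity
             clip01(s / max_speed),                 # velocity
             (a % 360.0) / 360.0,                   # angle in degrees
             clip01(0.5 + d / (2.0 * max_delta))]   # delta-velocity; 0.5 = no change
            for g, s, a, d in zip(g_row, s_row, a_row, d_row)
        ])
    return out


x = stack_channels([[255.0, 0.0]], [[10.0, 40.0]], [[90.0, 0.0]], [[0.0, -20.0]])
print(x)  # [[[1.0, 0.5, 0.25, 0.5], [0.0, 1.0, 0.0, 0.0]]]
```

A single linear angle channel treats 359 and 1 degrees as far apart; encoding the angle as separate sine and cosine channels would avoid that wrap-around, at the cost of one more channel.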

Image 1 shows the August 6, 2018 Holy Jim Fire in Orange County at an early stage. The upper row shows the reduced-size original image and the optical flow velocities, while the lower row shows the angles and delta-velocities. Videos of the complete FIgLib set are available at https://www.youtube.com/watch?v=teFw9MprHnI at 20 frames per second and at https://www.youtube.com/watch?v=1YaO2wLnh6I at 4 fps.
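The four-panel layout of these video frames amounts to tiling four equally sized images into a 2 x 2 grid: original and velocity on top, angle and delta-velocity below. A minimal sketch on nested lists, assuming all four panels have already been resized to the same dimensions and converted to the same value range; the `tile_2x2` name is hypothetical. With NumPy arrays, `numpy.block([[original, velocity], [angle, delta]])` would produce the same arrangement.

```python
from typing import List

Image = List[List[int]]


def tile_2x2(original: Image, velocity: Image, angle: Image, delta: Image) -> Image:
    """Arrange four equal-size panels as [original | velocity] over [angle | delta]."""
    panels = (original, velocity, angle, delta)
    h, w = len(original), len(original[0])
    if any(len(p) != h or any(len(row) != w for row in p) for p in panels):
        raise ValueError("all panels must have the same dimensions")
    top = [a + b for a, b in zip(original, velocity)]      # upper row of panels
    bottom = [a + b for a, b in zip(angle, delta)]         # lower row of panels
    return top + bottom


print(tile_2x2([[1]], [[2]], [[3]], [[4]]))  # [[1, 2], [3, 4]]
```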

Optical flow lets us use motion vectors to begin looking at behavior rather than only evaluating static images.

The next step will be to filter one or more of the data components through neural networks, with the velocities or delta-velocities being good candidates. Related work at Calit2 includes initial research by Po Hung (Spencer) Chen, an international summer research program student working with John Graham, into proposed deep learning platforms such as Keras and the Nvidia Deep Learning GPU Training System (DIGITS) retraining pipeline.

The California Institute for Telecommunications and Information Technology/Qualcomm Institute component of this work at the University of California San Diego was supported in part by NSF awards CNS-1730158, ACI-1540112, ACI-1541349, OAC-1826967, and the University of California Office of the President.